More highlights from SEG

On Monday I wrote that this year's Annual Meeting seemed subdued. And so it does... but as SEG continued this week, I started hearing some positive things. Vendors seemed pleasantly surprised that they had made some good contacts, perhaps as many as usual. The technical program was as packed as ever. And of course the many students here seemed to be enjoying themselves as much as ever. (New Orleans might be the coolest US city I've been to; it reminds me of Montreal. Sorry Austin.)

Quieter acquisition

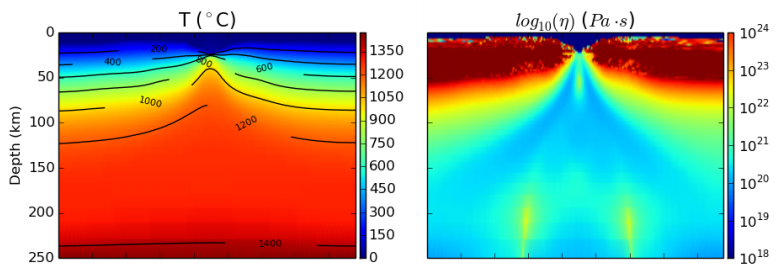

Pramik et al. (of Geokinetics) reported on a new marine vibrator acquisition using their AquaVib source. This instrument has been around for a while; indeed, it was first tested over 20 years ago by IVI and later Geco (e.g. see J Bird, TLE, June 2003). If perfected, it will allow for much quieter marine seismic acquisition, reducing harm to marine mammals, with no loss of quality (images from their abstract, copyright SEG):

Ben told me one of his favourite talks was Schostak & Jenkerson's report from a JIP (Shell, ExxonMobil, Total, and Texas A&M) trying to build a new marine vibrator. The current consortium is testing three designs: an electrical model from PGS, a mechanical piston from APS, and a bubble resonator from Teledyne.

In other news:

- Talks at Dallas 2016 will only be 15 minutes long. Hopefully this is to allow room in the schedule for something else, not just more talks.

- Dave Hale has retired from Colorado School of Mines, and apparently now 'writes software with Dean Witte'. So watch out for that!

- A sure sign of industry austerity: "Would you like Bud Light, or Miller Lite?"

- Check out the awesome ribbons that some clever student thought of. I'm definitely pinching that idea.

That's all I have for now, and I'm flying home today, so that's it for SEG 2015. I will be reporting on the hackathon soon, I promise, and I'll try to get my paper on Pick This recorded next week (but here's a sneak peek). Stay tuned!

References

Bill Pramik, M. Lee Bell, Adam Grier, and Allen Lindsay (2015) Field testing the AquaVib: an alternate marine seismic source. SEG Technical Program Expanded Abstracts 2015: pp. 181-185. doi: 10.1190/segam2015-5925758.1

Brian Schostak and Mike Jenkerson (2015) The Marine Vibrator Joint Industry Project. SEG Technical Program Expanded Abstracts 2015: pp. 4961-4962. doi: 10.1190/segam2015-6026289.1

Except where noted, this content is licensed
