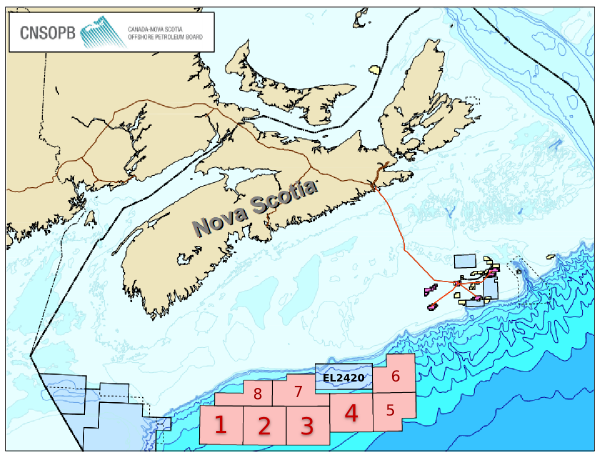

Location, location, location

A quiz: how many pieces of information do you need to accurately and unambiguously locate a spot on the earth?

It depends a bit on whether we're talking about locations on a globe, in which case we can use latitude and longitude, or locations on a map, in which case we will need coordinates and a projection too. Since maps are flat, we need a transformation from the curved globe into flatland: a projection.

So how many pieces of information do we need?

The answer is surprising to many people. Unless you deal with spatial data a lot, you may not realize that latitude and longitude are not enough to locate you on the earth. Likewise for a map, an easting (or x coordinate) and northing (y) are insufficient, even if you also give the projection, such as the Universal Transverse Mercator zone (20T for Nova Scotia). In each case, the missing information is the datum.

Why do we need a datum? It's similar to the problem of measuring elevation. Where will you measure it from? You can use 'sea-level', but the sea moves up and down in complicated tidal rhythms that vary geographically and temporally. So we concoct synthetic datums like Mean Sea Level, or Mean High Water, or Mean Higher High Water, or... there are 17 to choose from! To try to simplify things, there are standards like the North American Vertical Datum of 1988, but it's important to recognize that these are human constructs: sea-level is simply not static, spatially or temporally.

To give coordinates faithfully, we need a standard grid. Cartesian coordinates plotted on a piece of paper are straightforward: the paper is flat and smooth. But the earth's sphere is not flat or smooth at any scale. So we construct a reference ellipsoid, and then locate that ellipsoid on the earth. Together, these references make a geodetic datum. When we give coordinates, whether it's geographic lat–long or cartographic x–y, we must also give the datum. Without it, the coordinates are ambiguous.

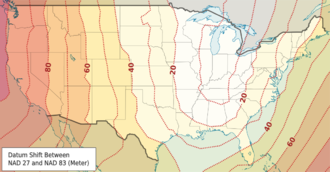

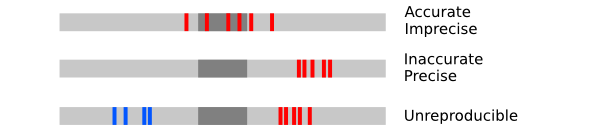

How ambiguous are they? It depends how much accuracy you need! If you're trying to locate a city, the differences are small: two important datums, NAD27 and NAD83, differ by up to about 80 m across most of North America. But 80 m is a long way when you're shooting seismic or drilling a well.
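To get a feel for the size of the problem, here's a rough sketch in Python. It takes a latitude and longitude given on NAD27, shifts them to WGS84, and reports how far off you'd be if you had plotted the NAD27 numbers as though they were WGS84. The three shift values are an assumption on our part: they are the commonly published mean geocentric translation for the conterminous US, good to several metres at best. The function names are ours too. For real work, use a proper grid-based transformation from a geodesy library.

```python
import math

# Ellipsoids as (semi-major axis in metres, inverse flattening).
CLARKE_1866 = (6378206.4, 294.9786982)   # ellipsoid behind NAD27
WGS_84 = (6378137.0, 298.257223563)      # ellipsoid behind WGS84

# ASSUMPTION: mean 3-parameter shift NAD27 -> WGS84 for the conterminous US,
# in metres. Approximate, and not appropriate outside that region.
NAD27_TO_WGS84_CONUS = (-8.0, 160.0, 176.0)


def geodetic_to_ecef(lat_deg, lon_deg, h, ellipsoid):
    """Latitude, longitude, ellipsoidal height -> earth-centred x, y, z."""
    a, inv_f = ellipsoid
    f = 1.0 / inv_f
    e2 = f * (2.0 - f)  # first eccentricity squared
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    n = a / math.sqrt(1.0 - e2 * math.sin(lat) ** 2)
    x = (n + h) * math.cos(lat) * math.cos(lon)
    y = (n + h) * math.cos(lat) * math.sin(lon)
    z = (n * (1.0 - e2) + h) * math.sin(lat)
    return x, y, z


def ecef_to_geodetic(x, y, z, ellipsoid, iterations=10):
    """Earth-centred x, y, z -> latitude, longitude, height (iterative)."""
    a, inv_f = ellipsoid
    f = 1.0 / inv_f
    e2 = f * (2.0 - f)
    lon = math.atan2(y, x)
    p = math.hypot(x, y)
    lat = math.atan2(z, p * (1.0 - e2))  # first guess assumes zero height
    h = 0.0
    for _ in range(iterations):
        n = a / math.sqrt(1.0 - e2 * math.sin(lat) ** 2)
        h = p / math.cos(lat) - n
        lat = math.atan2(z, p * (1.0 - e2 * n / (n + h)))
    return math.degrees(lat), math.degrees(lon), h


def nad27_to_wgs84(lat_deg, lon_deg, h=0.0):
    """Approximate datum shift using the assumed 3-parameter translation."""
    x, y, z = geodetic_to_ecef(lat_deg, lon_deg, h, CLARKE_1866)
    dx, dy, dz = NAD27_TO_WGS84_CONUS
    return ecef_to_geodetic(x + dx, y + dy, z + dz, WGS_84)


def mislocation_m(lat_deg, lon_deg):
    """Horizontal error from reading NAD27 numbers as if they were WGS84."""
    true_lat, true_lon, _ = nad27_to_wgs84(lat_deg, lon_deg)
    r = 6371000.0  # mean earth radius; fine for a small offset
    north = math.radians(true_lat - lat_deg) * r
    east = math.radians(true_lon - lon_deg) * r * math.cos(math.radians(lat_deg))
    return math.hypot(north, east)


offset = mislocation_m(39.224, -98.542)  # near Meades Ranch, Kansas
print(f"Mislocation: {offset:.0f} m")
```

Near Meades Ranch this comes out at a few tens of metres, almost all of it in the east-west direction. The numbers never changed; only the datum did.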

What are these datums then? In North America, especially in the energy business, we need to know three:

NAD27: North American Datum of 1927, based on the Clarke 1866 ellipsoid and fixed on Meades Ranch, Kansas. This datum is very commonly used in the oilfield, even today. The complexity and cost of moving to NAD83 are very large, so the move will probably happen v e r y s l o w l y. In case you need it, here's an awesome tool for converting between datums.

NAD83: North American Datum of 1983, based on the GRS 80 ellipsoid and fixed using a gravity field model. This datum is also commonly seen in modern survey data, so watch out if the rest of your project is NAD27! Since most people don't know the datum is important and therefore don't report it, you may never know the datum for some of your data.

WGS84: World Geodetic System of 1984, based on the WGS 84 ellipsoid, with the 1996 Earth Gravitational Model (EGM96) defining its geoid. It's the most widely used global datum, and the current standard in most geospatial contexts. The Global Positioning System uses this datum, and coordinates you find in places like Wikipedia and Google Earth use it. It is very, very close to NAD83, with less than 2 m difference in most of North America; but it gets a little worse every year, thanks to plate tectonics!

OK, that's enough about datums. To sum up: always ask for the datum. If you're generating geospatial information, always give the datum. You might not care too much about it today, but Evan and I have spent the better part of two days trying to unravel the locations of wells in Nova Scotia so trust me when I say that one day, you will care!
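If you write code that handles locations, one way to make "always give the datum" stick is to make it impossible to leave out. Here's a minimal sketch in Python. The `Position` class and the list of accepted datum names are our own invention for illustration, not part of any library.

```python
from dataclasses import dataclass

# ASSUMPTION: the three datums discussed in this post; extend as needed.
KNOWN_DATUMS = {"NAD27", "NAD83", "WGS84"}


@dataclass(frozen=True)
class Position:
    lat: float
    lon: float
    datum: str  # no default value, so it cannot be silently omitted

    def __post_init__(self):
        # Reject blank or unrecognized datums as soon as the object is made.
        if self.datum not in KNOWN_DATUMS:
            raise ValueError(f"Unknown or missing datum: {self.datum!r}")


# Hypothetical example: the same numbers on two different datums.
well = Position(44.65, -63.57, "NAD27")
gps_fix = Position(44.65, -63.57, "WGS84")

# Dataclass equality compares every field, datum included.
print(well == gps_fix)
```

Because the datum is part of the value, two positions with identical numbers but different datums do not compare equal, which is exactly the point.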

Disclaimer: we are not geodesy specialists, we just happen to be neck-deep in it at the moment. If you think we've got something wrong, please tell us! Map licensed CC-BY by Wikipedia user Alexrk2 — thank you! Public domain image of Earth from Apollo 17.

Except where noted, this content is licensed
