Digitalization... of what?

I've been hearing a lot about 'digitalization', or 'digital transformation', recently. What is this buzzword?

The general idea seems to be: exploit lots and lots of data (which we already have), probably with analytics and machine learning, to do a better job of finding and producing fuel and energy safely and responsibly.

At the centre of it all is usually data. Lots of data, usually in a lake. And this is where it all goes wrong. Digitalization is not about data. And it's not about technology either. Or cloud. Or IoT.

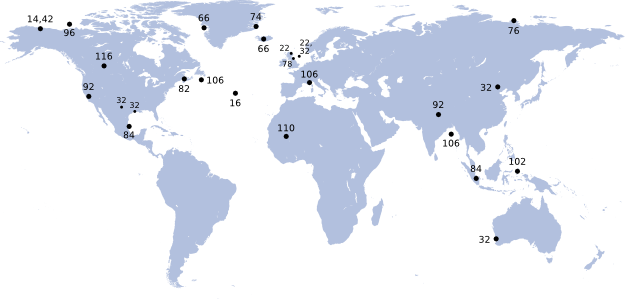

Interest in the terms "digital transformation" and "digitalization" since 2004, according to Google Trends. The data reveal a slight preference for the term "digitalization" in central and northern Europe. Google Ngram Viewer indicates that the term "digitalization" has been around for over 100 years, but it is also a medical term connected with the therapeutic use of digitalis. Just to be clear, that's not what we're talking about.

It's about people

Oh no, here I go with the hand-wavy, apple-pie "people not process" nonsense... well, yes. I'm convinced that it's humans we're transforming, not data or technology. Or clouds. Or Things.

I think it's worth spelling out, because I think most corporations have not grasped the human aspect yet. And I don't think it's unreasonable to say that the petroleum industry has a track record of not putting people at the centre of its activities, so I worry that this will happen again. This would be bad, because it might mean that digitalization not only fails to get traction, which would be bad enough because this revolution is long overdue, but also that it causes unintended problems.

Without people, digital transformation is just another top-down 'push' effort, with too much emphasis on supply. I think it's smarter to create demand, or 'pull', so that professionals are asking for support, and tools, and databases, and are engaged in how those things are created.

Put technical professionals at the heart of the revolution, and the rest will follow. The inverse is not true.

Strategies

This is far from an exhaustive list, but here are some ideas for ways to get ahead in digital transformation:

- Make it easy for digitally curious people to dip a toe in. Build a beginner-friendly computing environment, and encourage people to use it. Challenge your IT people to support a culture of experimentation and creativity.

- Give those curious professionals access to professional development channels, whether it's our courses, other courses, online channels like Lynda.com or Coursera, or whatever.

- Build a community of practice for 'scientific computing'. Whether it's a Yammer group or something more formal, be sure to encourage frequent face-to-face meetups, and perhaps an intranet portal.

- Start to connect subsurface professionals with software engineers, especially web programmers and data scientists, elsewhere in the organization. I think the best way is to embed programmers into technical teams.

- Encourage participation in external channels like conferences and publications, data science contests, hackathons, open source projects, and so on. I guarantee you'll see a step change in skills and enthusiasm.

The bottom line is that we're looking for a profound cultural change. It will be slow. More than that, it needs to be slow. It might only take a year or two to get traction for an idea like "digital first". But deeper concepts, like "machine readable microservices" or "data-driven decisions" or "reproducible workflows", must take longer because you can't build that high without a solid foundation. Successfully applying specific technologies like deep learning, augmented reality, or blockchain will certainly require a high level of technology literacy, and will take years to get right.

What's going on with scientific computing in your organization? Are you 'digitally curious'? Do you feel well-supported? Do you know others in your organization like you?

The circuit board image in the thumbnail for this post is by Carl Drougge, licensed CC-BY-SA.

Except where noted, this content is licensed
