Future proof

/Last week I wrote about the turmoil many subsurface professionals are experiencing today. There’s no advice that will work for everyone, but one thing that changed my life (ok, my career at least) was learning a programming language. Not only because programming computers is useful and fun, but also because of the technology insights it brings. Whether you’re into data management or machine learning, workflow automation or just being a more rounded professional, there really is no faster way to build digital muscles!

Six classes

We have six public classes coming up in the next few weeks. But there are thousands of online and virtual classes you can take — what’s different about ours? Here’s what I think:

All of the instructors are geoscientists, and we have experience in sedimentology, geophysics, and structural geology. We’ve been programming in Python for years, but we remember how it felt to learn it for the first time.

We refer to subsurface data and typical workflows throughout the class. We don’t use abstract or unfamiliar examples. We focus 100% on scientific computing and data visualization. You can get a flavour of our material from the X Lines of Python blog series.

We want you to be self-sufficient, so we give you everything you need to start being productive right away. You’ll walk away with the full scientific Python stack on your computer, and dozens of notebooks showing you how to do all sorts of things from loading data to making a synthetic seismogram.

Let’s look at what we have on offer.

Upcoming classes

We have a total of 6 public classes coming up, in two sets of three classes: one set with timing for North, Central and South America, and one set with timing for Europe, Africa, and the Middle East. Here they are:

Intro to Geocomputing, 5 half-days, 15–19 Feb — 🌎 Timing for Americas — 🌍 Timing for Europe & Africa — If you’re just getting started in scientific computing, or are coming to Python from another language, this is the class for you. No prerequisites.

Digital Geology with Python, 4 half-days, 22–25 Feb — 🌍 Timing for Europe & Africa — A closer look at geological workflows using Python. This class is for scientists and engineers with some Python experience.

Digital Geophysics with Python, 4 half-days, 22–25 Feb — 🌎 Timing for Americas — We get into some geophysical workflows using Python. This class is for quantitative scientists with some Python experience.

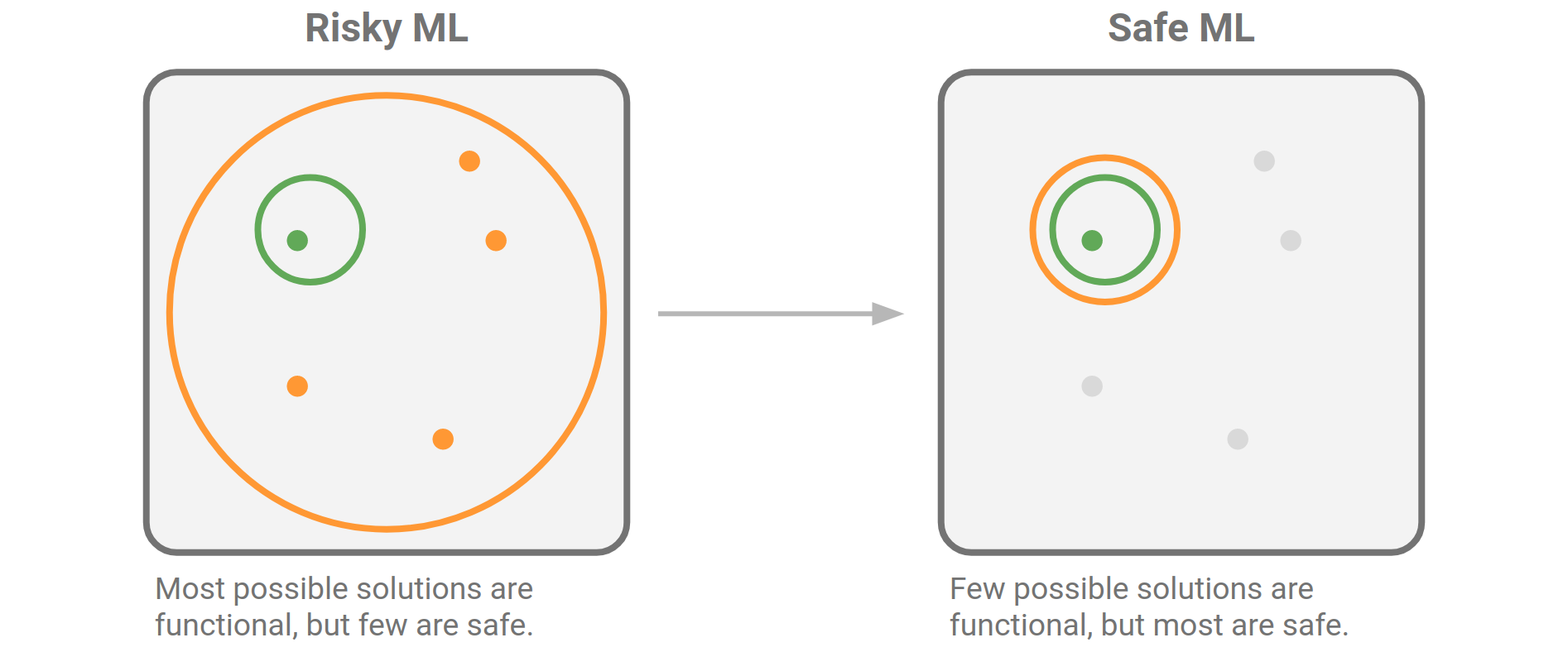

Machine Learning for Subsurface, 4 half-days in March — 🌎 Timing for Americas (1–4 Mar) — 🌍 Timing for Europe & Africa (8–11 Mar) — The best way into machine learning for earth scientists and subsurface engineers. We give you everything you need to manage your data and start exploring the world of data science and machine learning.

Follow the links above to find out more about each class. We have space for 14 people in each class. You find pricing options for students and those currently out of work. If you are in special circumstances, please get in touch — we don’t want price to be a barrier to these classes.

In-house options

If you have more than about 5 people to train, it might be worth thinking about an in-house class. That way, the class is full of colleagues learning things together — they can speak more openly and share more freely. We can also tailor the content and the examples to your needs more easily.

Get in touch if you want more info about this approach.

Except where noted, this content is licensed

Except where noted, this content is licensed