Bring it into time

A student competing in the AAPG's Imperial Barrel Award recently asked me how to take seismic data, and “bring it into depth”. How I read this was, “how do I take something that is outside my comfort zone, and make it fit with what is familiar?” Geologists fear the time domain. Geology is in depth, logs are in depth, drill pipe is in depth. Heck, even X and Y are in depth. Seismic data relates to none of those things; useless, right?

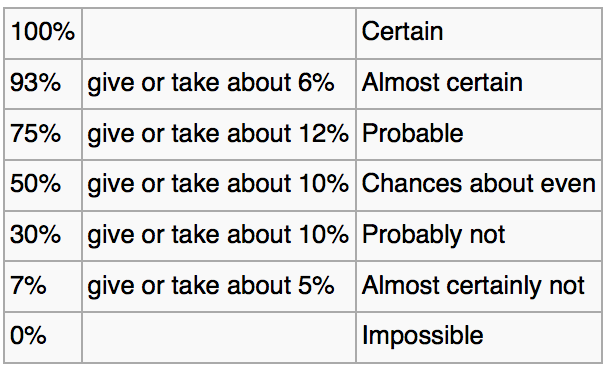

It is excusable for the uninitiated, but this concept of “bringing [time domain data] into depth” is an informal fallacy. Experienced geophysicists understand this because depth conversion, in all of its forms and derivatives, is a process that introduces a number of known unknowns. It is easier for others to be dismissive of these nuances, or to ignore them. So early-onset discomfort with the travel-time domain ensues. It is easier to stick to a domain that doesn’t cause such mental backflips; a kind of temporal spatial comfort zone.

Linear in time

However, the unconverted should find comfort in one property where the time domain is advantageous; it is linear. In contrast, many drillers and wireline engineers are quick to point out that measured depth is not necessarily linear. Perhaps time is an even more robust, more linear domain of measurement (if there is such a concept). And, as a convenient result, a world of possibilities emerges out of time-linearity: time-series analysis, digital signal processing, and computational mathematics. Repeatable and mechanical operations on data.
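To make the point about linearity concrete, here is a minimal sketch of what happens to regularly sampled travel-time when it is pushed into depth. Everything in it is an assumption for illustration: the 4 ms sample interval, the made-up linear velocity function `interval_velocity`, and the helper `time_to_depth` are hypothetical, not a real velocity model or anyone's depth-conversion workflow.

```python
# Hypothetical values for illustration only; not taken from any real survey.
DT = 0.004  # sample interval in seconds of two-way travel time


def interval_velocity(twt):
    """Assumed velocity model: 2000 m/s at the surface,
    increasing by 600 m/s for every second of two-way time."""
    return 2000.0 + 600.0 * twt


def time_to_depth(n_samples, dt=DT):
    """Return the depth of each regularly spaced time sample by
    summing velocity * one-way time over each sample interval."""
    depths = [0.0]
    for i in range(1, n_samples):
        t_mid = (i - 0.5) * dt  # midpoint of the current time interval
        dz = interval_velocity(t_mid) * dt / 2.0  # halve for one-way distance
        depths.append(depths[-1] + dz)
    return depths


depths = time_to_depth(501)  # 2 seconds of data at 4 ms
steps = [b - a for a, b in zip(depths, depths[1:])]

print(f"first depth step: {steps[0]:.2f} m")
print(f"last depth step:  {steps[-1]:.2f} m")
```

Under these assumptions the time samples are evenly spaced, but the depth steps they map to grow as velocity increases. Tools that expect a constant sample interval, such as a fast Fourier transform, can be applied directly to the time series; the depth version would first need resampling, and its result depends on whatever velocity model was chosen.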

Boot camp in time

The depth domain isn’t exactly omnipotent. A colleague, who started her career as a wireline engineer at Schlumberger, explained to me that her new-graduate training involved painfully long recitations and lecturing on the intricacies of depth. What is measured depth? What is true vertical depth? What is drill-pipe stretch? What is wireline stretch? And so on. Absolute depth is important, but even with seemingly rigid sections of solid steel drill pipe, it is still elusive. And if any measurement requires a correction, that measurement has error. So even depth-domain data has its peculiarities.
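As a small illustration of the first two questions, the sketch below converts measured depth to true vertical depth for a deviated well using the average-angle method. The survey stations are invented, the function name `tvd_average_angle` is hypothetical, and the calculation assumes the first station sits at zero depth; it ignores azimuth, pipe stretch, and every other correction mentioned above.

```python
import math

# Hypothetical survey stations, invented for illustration:
# (measured depth in metres, inclination in degrees from vertical).
survey = [(0.0, 0.0), (500.0, 0.0), (1000.0, 30.0), (1500.0, 60.0), (2000.0, 60.0)]


def tvd_average_angle(stations):
    """Accumulate true vertical depth between stations using the
    average-angle method: each segment's vertical extent is its
    measured length times the cosine of the mean inclination."""
    tvd = [0.0]  # assumes the first station is at zero depth
    for (md0, inc0), (md1, inc1) in zip(stations, stations[1:]):
        mean_inc = math.radians((inc0 + inc1) / 2.0)
        tvd.append(tvd[-1] + (md1 - md0) * math.cos(mean_inc))
    return tvd


tvd = tvd_average_angle(survey)
for (md, _), z in zip(survey, tvd):
    print(f"MD {md:7.1f} m  ->  TVD {z:7.1f} m")
```

With these made-up stations, the two depths agree while the well is vertical and drift apart as it builds angle, so the same point in the earth carries two different "depths" before any stretch correction is even considered.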


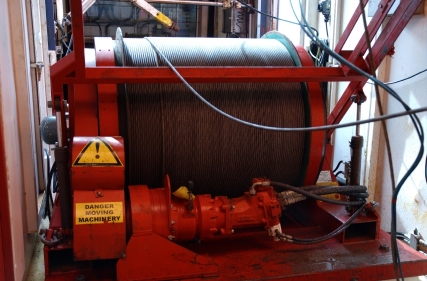

Few of us ever get the privilege of such rigorous training in the spread of depth measurements. Sitting on the back of the rhetorical wireline truck, watching the coax cable unspool into the wellhead. Few of us have lifted a 300-pound logging tool, to feel the force that it would impart on kilometres of cable. We are the recipients of measurements. Either it is a text file, or an image. It is what it is, and who are we to change it? What would an equivalent boot camp for travel-time look like? Is there one?

In the filtered earth, even the depth domain is plastic. Travel-time is the only absolute.

Except where noted, this content is licensed
